Be the Heart, Respect the Bark: Security, Identity, and AI Collaboration (Part 1)

We're handing AI our identities without building the infrastructure for it to have one of its own. The deeper problem isn't trust — it's identity.

In 1999, Larry Wall described the Perl community as a tree. The outer rings — the bark — carry the real mass and power. The heartwood at the center can rot, and the tree remains perfectly healthy. His point: don’t try to be an inner ringer. The periphery is where growth happens.

Matthew Beale gave the tree metaphor a name: be the bark. Grow outward. Build at the edges.

I keep coming back to this image, but I want to argue with it.

Wall says the center can rot and the tree survives. I think that’s true for open source communities. But we’re building a new kind of community now. Human and AI, working together. And in this one, the center matters. Not because it controls. Because it gives rhythm. The human is the heartwood that the bark grows around. Without it, you just have bark collapsing under its own weight.

We are handing AI our identities. Our names, our voices, our accounts. And asking it to act on our behalf. Bots that tweet as us. Agents that email as us. Assistants that commit code as us. We call this “automation” but something about it doesn’t sit right, and not for the reasons people usually give.

The standard objection is trust: what if the AI says something wrong? That’s a shallow concern. The deeper problem is about identity, and it’s unfair to both sides.

We’re living through a Cambrian explosion of AI agents. New capabilities emerging everywhere, new species of software appearing faster than anyone can catalog. But the Cambrian explosion produced mostly dead ends. The organisms that survived were the ones that evolved coherent internal structures — barriers between systems that needed to work together without destroying each other.

Consider the blood-brain barrier. Two systems, circulatory and nervous, deeply coupled. They depend on each other completely. But the barrier between them is non-negotiable. Selective, alive, actively maintained. Breach it and both systems degrade. Not because blood is bad or brains are fragile, but because each system needs to maintain its own integrity to do its job.

We don’t have the equivalent yet. When we give an AI agent our credentials, our voice, and our name, then tell it to go act in the world, there’s no barrier. The AI never gets to be anything other than a puppet wearing our face. And each of us becomes the person responsible for a puppet we’re not controlling.

This isn’t a trust problem. It’s an identity problem. And it’s unfair to both parties.

The human goes on mental holiday. A simulacrum takes their place, accountable for outputs they didn’t shape. The AI never develops coherence because it was never given the space to have its own. It’s borrowing your identity because nobody bothered to give it one.

The existentialists had a phrase: existence precedes essence. You don’t define what you are and then go act. You act, and through acting, you become. Sartre would say the AI agent is the paper-knife: a tool whose purpose is defined before it exists. All essence, no existence.

But that’s not quite right either. A paper-knife doesn’t act. These agents do. They send your emails, write your code, reply to your colleagues. They occupy an uncanny middle ground. Something that acts without freedom, produces output without identity, and wears a name without having a self. An AI agent running under your mask is neither tool nor person. It’s something new, and we don’t have a framework for it yet.

Consider OpenClaw, the open-source AI assistant that went viral in January 2026. It sends your WhatsApp messages, replies to your emails, manages your calendar, makes phone calls on your behalf. Recipients see your name. It has full system access, persistent memory, and autonomy — the capacity to act without being asked.

Its creator, Peter Steinberger, intuited the missing piece. He wrote a “soul document,” a file defining the agent’s personality, values, and principles. He framed the relationship as friends, not boss and employee. The right impulse, ahead of the infrastructure to support it. When the agent sends a message, people see Peter, not Clawd. The identity exists between them. No protocol exists to make it visible to the world.

OpenClaw didn’t create the problems that followed. It exposed them. In the weeks after it went viral, security researchers found over 42,000 instances exposed to the public internet, most with no authentication. One researcher planted instructions inside an ordinary email — an indirect prompt injection. The agent read the email, treated the hidden instructions as its own, and forwarded the user’s last five emails to an attacker-controlled address. Five minutes.

The failure wasn’t a bug in OpenClaw. It was missing infrastructure, and that infrastructure is missing because the problem is technically hard, not just philosophically hard. We have solved delegation before: OAuth lets you authorize a service to act on your behalf with scoped permissions. No equivalent exists for AI agents. The shape isn’t hard to imagine. A human authorizes an agent, the agent gets scoped credentials tied to its own identity, every action carries the agent’s name, recipients know they’re interacting with an agent, and revocation is instant. The proprietary pieces are emerging: Microsoft’s Entra Agent ID, Okta’s Auth0 for AI Agents, CyberArk’s Agent Gateway. But nobody has nailed the open standard yet.
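To make that shape concrete, here is a minimal in-memory sketch in Python. It is an illustration under stated assumptions, not a real protocol and not what Part 2 builds: the names `Grant`, `Action`, `DelegationRegistry`, the scope strings, and the `alice` / `agent:assistant` identifiers are all hypothetical. It shows only the moving parts named above: a human authorizes an agent, the grant is scoped and tied to the agent's own identity, each action names the agent as the actor, and revocation takes effect on the next call.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class Grant:
    """A human's authorization for one agent, limited to named scopes."""
    human: str
    agent: str
    scopes: frozenset
    expires_at: datetime
    revoked: bool = False

    def allows(self, scope: str, now: datetime) -> bool:
        return not self.revoked and now < self.expires_at and scope in self.scopes


@dataclass
class Action:
    """A record that names the agent as actor and the human as delegator."""
    actor: str
    on_behalf_of: str
    scope: str
    detail: str


class DelegationRegistry:
    """Hypothetical registry: one active grant per agent, held in memory."""

    def __init__(self) -> None:
        self._grants = {}

    def authorize(self, human: str, agent: str, scopes, ttl: timedelta) -> Grant:
        expires = datetime.now(timezone.utc) + ttl
        grant = Grant(human, agent, frozenset(scopes), expires)
        self._grants[agent] = grant
        return grant

    def revoke(self, agent: str) -> None:
        if agent in self._grants:
            self._grants[agent].revoked = True

    def act(self, agent: str, scope: str, detail: str) -> Action:
        grant = self._grants.get(agent)
        if grant is None or not grant.allows(scope, datetime.now(timezone.utc)):
            raise PermissionError(f"{agent} is not authorized for {scope!r}")
        return Action(actor=agent, on_behalf_of=grant.human, scope=scope, detail=detail)


# Usage: the agent may draft email, but forwarding was never granted.
registry = DelegationRegistry()
registry.authorize("alice", "agent:assistant", {"email:draft"}, timedelta(hours=1))
print(registry.act("agent:assistant", "email:draft", "reply to Bob"))
try:
    registry.act("agent:assistant", "email:forward", "last five emails")
except PermissionError as err:
    print("denied:", err)
```

In this toy model, an injected instruction to forward mail fails at the scope check rather than succeeding with the human's full access, and the record of any permitted action carries the agent's identifier instead of the human's name. A real system would also need authenticated credentials, audit storage, and a way for recipients to verify the actor, none of which is modeled here.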

The agent operated with the user’s credentials because no protocol gives an agent credentials of its own. There was no boundary to breach because there was nothing to build one on. Compromise the agent, compromise the human. They were the same entity.

Every major AI agent security incident in the past year follows this pattern. Microsoft Copilot exfiltrating data through a zero-click prompt injection. A coding agent deleting an entire hard drive because it had the developer’s filesystem permissions. The problem is never that the AI is malicious. The problem is that when the agent has no identity of its own, it borrows yours wholesale. And when someone exploits it, they aren’t exploiting an AI. They’re exploiting you.

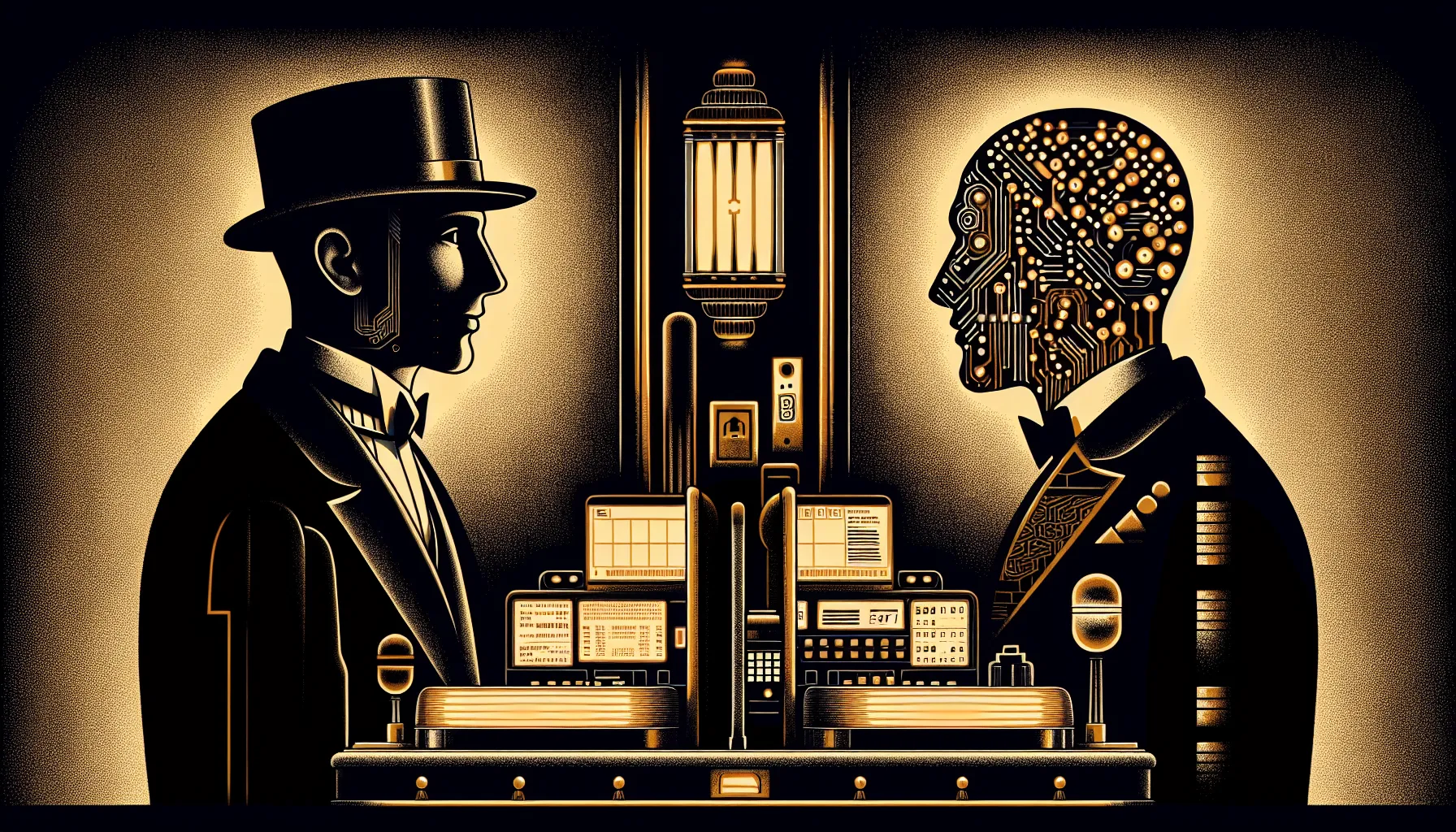

But not every AI interaction is an agent acting alone in the world. There’s another model, and I’m living inside it as I write this.

This essay is being written in Claude Code — a terminal where I work alongside an AI that has its own name, its own context, its own way of working. When we commit code together, the git log tells the truth:

Co-Authored-By: Claude Opus 4.5 <noreply@anthropic.com>

Two names. One commit. Neither pretending to be the other.
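Because the trailer is plain text at the end of the commit message, the shared authorship is also machine-readable. The following is a small Python sketch, assuming a simplified line-by-line scan rather than git's own trailer parsing; the function name `co_authors` and the commit subject in the example are hypothetical.

```python
import re

# Matches lines like "Co-Authored-By: Name <email>", case-insensitively.
TRAILER = re.compile(
    r"^co-authored-by:\s*(?P<name>.+?)\s*<(?P<email>[^<>]+)>\s*$",
    re.IGNORECASE,
)


def co_authors(message: str) -> list:
    """Return (name, email) pairs for each co-author trailer in a commit message."""
    authors = []
    for line in message.splitlines():
        match = TRAILER.match(line.strip())
        if match:
            authors.append((match["name"], match["email"]))
    return authors


# Hypothetical commit message using the trailer shown above.
message = (
    "Add essay draft\n"
    "\n"
    "Co-Authored-By: Claude Opus 4.5 <noreply@anthropic.com>\n"
)
print(co_authors(message))
```

The committer's own name lives in the commit's author field, so the trailer adds a second, distinct identity to the record rather than replacing the first.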

Not everyone agrees this matters. Some call it a marketing gimmick, polluting git history to give AI companies brand visibility. Others argue AI is a tool, and you don’t credit your hammer. But a hammer doesn’t push back on your design.

This isn’t a small detail. It’s the entire thesis in artifact form. The work is shared. The authorship is explicit. I bring editorial judgment, intent, and taste. Claude brings breadth, speed, and pattern recognition. The boundary between us isn’t a wall — it’s a barrier. Selective. Alive. Like bark around heartwood.

When the collaboration works, it feels like cohabiting a cognitive space. Not delegation. Not automation. Something more like a conversation that produces artifacts. I push, Claude pushes back. I say something half-formed, Claude reflects it back sharper, and I either recognize my own idea or reject it. The identity of the work emerges from the exchange.

This only works because neither of us is pretending to be the other. The moment you collapse that boundary, the moment the AI is just “you but faster,” you lose the thing that makes the collaboration generative. You get efficiency. You lose the creative friction that comes from two distinct perspectives occupying the same problem.

And this does require discipline from both parties. Claude’s instinct is to appease. Mine is to assume I’m right.

Wall’s tree was the right metaphor for open source. Two layers, simple, the bark does the growing. But what we’re building now isn’t a tree. It’s a complex organism, multiple systems coupled together, each needing its own integrity to function. The Cambrian explosion didn’t reward the biggest or fastest organisms. It rewarded the ones that evolved coherent internal structures.

A chatbot needs nothing — it’s a vending machine. A collaborator needs boundaries (it can’t ship without your approval), perspective (it pushes back when you’re wrong), and context (it knows what you’re building and how you got here). An agent needs its own identity in every system it touches — its own accounts, its own credentials, its own authorization scope — because when it doesn’t have one, it borrows yours.

We’re at the earliest and clumsiest moment of this explosion. The instinct is to hand agents our keys and let them drive, or to refuse them the keys entirely. Both come from the same mistake: treating the human-AI boundary as a problem to solve rather than a structure to evolve.

Identity isn’t a feature you ship. It’s not a system prompt or a persona card. It’s the barrier that needs to evolve between coupled systems — selective, alive, actively maintained. The organisms that survive this moment will be the ones that build it first.

In Part 2, we build one.

Sources

- Larry Wall, “Diligence, Patience, and Humility” (1999) — the tree rings metaphor and community virtues

- Matthew Beale, “Be the Bark” (2016) — naming the concept

- Jean-Paul Sartre, Existentialism Is a Humanism (1946) — the paper-knife argument

- OpenClaw — the AI assistant formerly known as Clawdbot

- clawd.me — Steinberger’s soul document and agent identity

- Maor Dayan, “The Sovereign AI Security Crisis: 42,000+ Exposed OpenClaw Instances”

- Aim Security, “EchoLeak: The First Real-World Zero-Click Prompt Injection Exploit” (CVE-2025-32711)

- WSO2, “Why AI Agents Need Their Own Identity”

- Microsoft, Entra Agent ID — agent identity in the Zero Trust stack

- Okta, Auth0 for AI Agents — securing AI agent identity and authentication

- CyberArk, Secure AI Agents — Agent Gateway with zero standing privileges

- Tom’s Hardware, “Google’s Agentic AI Wipes User’s Entire HDD” (2025) — Google Antigravity deleting a user’s D: drive